AI Engineering

Turning AI prototypes into production systems.

AI engineering is the discipline that sits between "the model can do this" and "this runs in production for paying users." It is mostly the unglamorous parts (tool registries, exit conditions, retries, eval suites, observability) that decide whether your agent works on day 30, not just day one.

The patterns I broke in 2025 and what replaced them

Supervisor patterns gave way to handoffs. Inline tool descriptions gave way to externalised, per-tool selection criteria. "Use this when…" beat "this function returns…" every time. JSON mode replaced bespoke parsers. RAG-by-default got replaced by a measure-then-decide framework. These are the moves that separate engineering from improv.

Tool design as a first-class concern

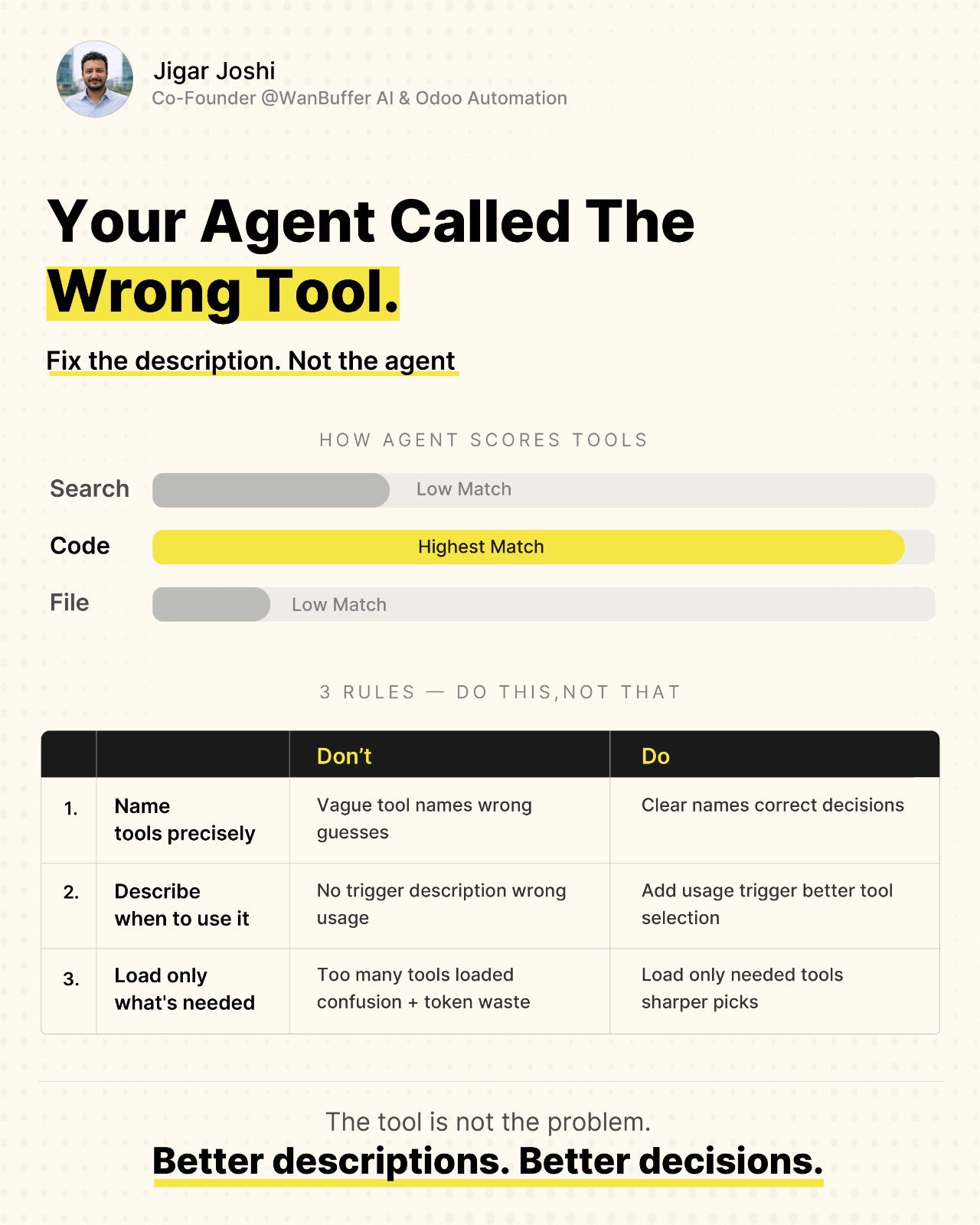

When an agent picks the wrong tool, the registry is broken — not the agent. Three rules: name tools precisely, describe when to use each one, load only what the task needs. Anthropic's tool-use telemetry finally puts numbers on what changes accuracy.
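A minimal sketch of those three rules, assuming a hypothetical in-memory registry. The tool names, the `use_when` field, and the `tools_for_task` helper are illustrative only and are not taken from any particular framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tool:
    name: str        # rule 1: a precise, verb_noun style name
    use_when: str    # rule 2: the trigger condition, written for the model
    tags: frozenset  # task categories this tool belongs to


# Hypothetical registry; every entry here is an illustrative assumption.
REGISTRY = [
    Tool("search_invoices_by_customer",
         "Use when the user asks about a customer's past invoices.",
         frozenset({"billing"})),
    Tool("create_refund",
         "Use when a refund has been approved and must be issued.",
         frozenset({"billing"})),
    Tool("lookup_shipment_status",
         "Use when the user asks where an order is.",
         frozenset({"logistics"})),
]


def tools_for_task(task_tag: str) -> list[Tool]:
    """Rule 3: load only the tools the current task needs."""
    return [t for t in REGISTRY if task_tag in t.tags]


def render_for_prompt(tools: list[Tool]) -> str:
    """Render the curated subset as the description text the model sees."""
    return "\n".join(f"- {t.name}: {t.use_when}" for t in tools)


billing_tools = tools_for_task("billing")
print(render_for_prompt(billing_tools))
```

With this shape, a billing task never sees the logistics tool at all, so it cannot be picked by mistake.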

Cost discipline is design, not optimisation

The cheapest LLM call is the one you do not make. Pre-agentic data fetching, prompt caching, and registry pruning compound into 30–60% cost reductions on real workflows — before you touch a prompt. Cost is not a post-launch concern; it is a design constraint from day one.

Deep dives on AI Engineering

Tool descriptions are prompts. Fix the registry, not the agent.

When an agent picks the wrong tool, the registry is broken — not the agent. Three rules I now apply before debugging anything in a multi-tool system: precise names, "when to use" triggers, and a curated load list. Anthropic's new tool-selection telemetry finally puts numbers on what changes accuracy.

The cheapest LLM call is the one you do not make — GitHub's 19-62% token cut, decoded

GitHub published an instrumented analysis of their agentic CI workflows and reported 19-62% token-cost reductions. The savings are the headline. The technique — pre-agentic data fetching and tool-registry hygiene — is the story most teams will miss.
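Pre-agentic data fetching can be sketched roughly as follows. This is not GitHub's implementation: `fetch_failed_job_log`, `triage`, and the stub model are hypothetical stand-ins that only show the shape of the idea, which is to fetch deterministic data in plain code before the model is called.

```python
from typing import Callable


def fetch_failed_job_log(run_id: int) -> str:
    """Hypothetical deterministic fetch; stands in for a real CI API call."""
    return f"run {run_id}: step 'test' failed with exit code 1"


def build_prompt(run_id: int) -> str:
    # Fetch the data in plain code before the model is involved, so the
    # model never spends a tool-call round trip asking for it.
    log = fetch_failed_job_log(run_id)
    return f"Summarise the failure below in one sentence.\n\n{log}"


def triage(run_id: int, call_model: Callable[[str], str]) -> str:
    """One model call, with the context already in the prompt."""
    return call_model(build_prompt(run_id))


# Stub model for illustration; a real client call would go here.
calls = []


def fake_model(prompt: str) -> str:
    calls.append(prompt)
    return "Tests failed."


result = triage(42, fake_model)
print(result, len(calls))
```

The fetch tool also drops out of the registry entirely, which is where the data-fetch and registry-pruning savings overlap.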

Claude Opus 4.7's 1M context: when to RAG and when to just stuff it

A reliable million-token context window is real now, but it does not retire RAG; it changes the calculus. Cost, latency, recency, and the prompt-cache angle nobody is talking about.

Why I am replacing supervisor patterns with handoffs

Supervisors looked clean on paper and shipped slow in production. Handoffs read messier in the code but recover better when an agent loses the plot. Two real systems and where supervisors still earn their keep.

Prompt caching is not optional anymore — measuring a 47% cost drop

A walkthrough from a client engagement: identifying stable prefixes, restructuring the system prompt for cacheability, and the telemetry that proved caching was actually working.
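As a rough illustration of "stable prefix first", the sketch below builds a request payload and computes a cache-hit ratio from a usage block. The `cache_control` marker and the usage field names follow Anthropic's prompt-caching documentation as I understand it; treat them as assumptions to verify against the current docs. The helper names and the `model` placeholder are hypothetical, and nothing here calls the API.

```python
def build_request(stable_prefix: str, volatile_context: str,
                  user_msg: str, model: str) -> dict:
    """Put stable content first and mark its end as a cache breakpoint."""
    return {
        "model": model,
        "max_tokens": 1024,
        "system": [
            # Stable prefix: instructions that do not change between calls.
            {"type": "text", "text": stable_prefix,
             "cache_control": {"type": "ephemeral"}},
            # Volatile content goes after the breakpoint so that changing it
            # does not invalidate the cached prefix.
            {"type": "text", "text": volatile_context},
        ],
        "messages": [{"role": "user", "content": user_msg}],
    }


def cache_hit_ratio(usage: dict) -> float:
    """Share of input tokens served from cache, given a response usage block."""
    read = usage.get("cache_read_input_tokens", 0)
    written = usage.get("cache_creation_input_tokens", 0)
    fresh = usage.get("input_tokens", 0)
    total = read + written + fresh
    return read / total if total else 0.0


req = build_request("You are a support agent.", "Today's queue: 12 tickets.",
                    "Summarise ticket 7.", "model-name-placeholder")
print(cache_hit_ratio({"cache_read_input_tokens": 900, "input_tokens": 100}))
```

Tracking the ratio per request is one way to confirm that caching is working rather than assuming it.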

Tool descriptions are prompts. Stop treating them like docstrings

A docstring tells a developer what a function does. A tool description tells a model when to call it. Different audience, different writing. Six concrete edits that lifted tool-call accuracy.

The agent observability stack we ship to every client

Traces, spans, evals, cost-per-completed-task, and the one dashboard panel that catches 80% of regressions. Vendor-agnostic — covers Langfuse, Honeycomb, and rolling your own.
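Cost-per-completed-task is not defined in detail here, so the sketch below shows one reasonable reading: total spend across all runs, including failed ones, divided by the number of runs that completed. The `TaskRun` record and its fields are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class TaskRun:
    task_id: str
    cost_cents: int   # total LLM spend for this run, in cents
    completed: bool   # did the task reach a successful end state?


def cost_per_completed_task(runs: list[TaskRun]) -> Optional[float]:
    """Total spend (failed runs included) divided by completed runs.

    Returns None when nothing completed, since the ratio is undefined.
    """
    completed = sum(1 for r in runs if r.completed)
    if completed == 0:
        return None
    return sum(r.cost_cents for r in runs) / completed


runs = [
    TaskRun("a", 10, True),
    TaskRun("b", 30, False),  # a failed run still costs money
    TaskRun("c", 20, True),
]
print(cost_per_completed_task(runs))
```

Counting failed runs in the numerator is the design choice that matters: retries and abandoned tasks raise the metric even when per-call cost looks flat.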

Three patterns I broke in 2025 — and what I do instead now

Self-correction loops without budgets, single-agent solutions to multi-domain problems, and using JSON mode to force structure I should have built into the schema. An honest review.

Eval datasets: stop testing your agents on the happy path

If your eval set is the demos you showed the client, you are testing the wrong thing. How we build evals from production failures and the minimum viable suite to ship.

I was wrong about JSON mode. Here is what changed my mind

For two years I told teams to avoid forced JSON outputs and use structured tool calls. That was right then and partially wrong now — schema enforcement got better, latency penalties got smaller.

Why your agent keeps failing after 3 steps

The exit condition problem nobody talks about. Most agents are built for the happy path — where every tool call succeeds and the task completes cleanly. Real production agents are different.
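One way to make exit conditions explicit is to give the loop a step budget and a failure budget, and to return the reason it stopped. This is a sketch under assumptions: the `step` callable and its three string outcomes are hypothetical, and a real agent would wrap model and tool calls inside it.

```python
from enum import Enum
from typing import Callable


class Exit(Enum):
    DONE = "done"
    STEP_BUDGET = "step_budget_exhausted"
    REPEATED_FAILURE = "repeated_tool_failure"


def run_agent(step: Callable[[int], str],
              max_steps: int = 8,
              max_consecutive_failures: int = 2) -> tuple[Exit, int]:
    """Run until done, out of steps, or stuck on repeated failures.

    `step(i)` is a hypothetical callable returning "done", "ok", or "error".
    Returns the exit reason and the number of steps taken.
    """
    failures = 0
    for i in range(max_steps):
        outcome = step(i)
        if outcome == "done":
            return Exit.DONE, i + 1
        if outcome == "error":
            failures += 1
            if failures >= max_consecutive_failures:
                return Exit.REPEATED_FAILURE, i + 1
        else:
            failures = 0  # a successful step resets the failure streak
    return Exit.STEP_BUDGET, max_steps


print(run_agent(lambda i: "done" if i == 2 else "ok"))
```

Returning the exit reason, not just a result, lets the caller decide whether to retry, hand off, or escalate to a human.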

The one rule for designing agent tools that actually work

One tool, one purpose. Every tool that does two things will fail you on the third call. I have watched this pattern fail in every team I have trained — and the fix is the same refactor.
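The refactor might look something like the sketch below. The ticketing functions are hypothetical and return canned data; the point is only the shape of the change from one mode-switched tool to two single-purpose tools.

```python
# Before (hypothetical): one tool, two purposes, selected by a mode flag.
# The model has to pick the right action AND the right subset of arguments.
def manage_ticket(action: str, ticket_id=None, title=None) -> dict:
    if action == "create":
        return {"id": 1, "title": title, "status": "open"}
    if action == "close":
        return {"id": ticket_id, "status": "closed"}
    raise ValueError(f"unknown action: {action}")


# After: each tool has one purpose and takes only the arguments it needs.
def create_ticket(title: str) -> dict:
    return {"id": 1, "title": title, "status": "open"}


def close_ticket(ticket_id: int) -> dict:
    return {"id": ticket_id, "status": "closed"}


print(create_ticket("Printer offline"))
print(close_ticket(7))
```

The single-purpose versions also make invalid calls, such as closing a ticket without an id, impossible to express.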

RAG vs CAG: how to actually decide

A decision framework from real implementations. RAG retrieves. CAG stores in cache. Knowing which to use — and when to combine both — determines whether your agent finds the right answer at the right cost.

Latest in AI Engineering

MCP remote-server registry crosses 500 listed servers — a curated production-ready tier emerges

GitHub cuts agentic CI workflow costs 19-62% by pruning tools and moving data-fetch outside the LLM loop

Claude Opus 4.7 ships with 1M-token context window in production

Claude Code adds project memory — persistent context that survives across CLI sessions

Anthropic publishes "Effective Tool Design" — official guidance for production agents

Sonnet 4.6 update: cheaper tokens, sharper tool calls, fewer retry loops

Haiku 4.5 in production — small-model speed, surprising tool-use chops

Cursor 1.0 stabilises background agents and ships a review-and-merge workflow

How AI Engineering ships in our engagements

The pages below are the buyer-focused, conversion-grade versions of this topic — deliverables, methodology, ROI, security considerations, and CTAs to scope a real engagement.

Agentic AI Consulting

Designed, built, and handed off — production agentic systems for enterprise teams.

Explore the Agentic AI Consulting solution

MCP Integration

Custom Model Context Protocol servers that turn your systems into agent tools.

Explore the MCP Integration solution

AI Guardrails

Multi-layer safety, policy, and audit controls for agents in regulated environments.

Explore the AI Guardrails solution

AI Systems Engineering Training

Eight-day corporate training programs that take dev teams from AI-assisted coding to production agentic systems.

Explore the AI Systems Engineering Training solution

Enterprise AI Architecture

Reference architectures for organisations standing up an AI platform — not one agent, but the foundation for many.

Explore the Enterprise AI Architecture solution

AI Observability

Tracing, eval, cache-hit telemetry, and cost attribution for production agents.

Explore the AI Observability solution

Multi-Agent Workflows

Supervisor + handoff orchestration for portfolios of agents that need to cooperate without arguing.

Explore the Multi-Agent Workflows solution

AI Automation for Enterprises

Operational agents that replace manual workflows — triage, support, ERP integration, content pipelines.

Explore the AI Automation for Enterprises solution

AI Engineering: the questions teams actually ask

Train your team on AI Engineering

Two tracks — one for developers who build agents, one for business teams who use them. Customised to your stack, hands-on from session 1.

See AI Engineering training tracks

Ship your first AI Engineering system

Architecture design, production implementation on Claude API and MCP, full observability, and a real handoff. Working agents, not slides.

Explore AI Engineering consulting

Adjacent topics to read next

Agentic AI

Designing, building, and shipping production agents.

Model Context Protocol (MCP)

The open protocol that gives agents tools.

Multi-Agent Systems

Orchestrating many agents without losing the plot.

Claude API

Building production agents on Anthropic's Claude.

AI Observability

Tracing, eval, and telemetry for production agents.